Operators Are the Missing Role in AI Agent Design

Everyone is racing to build autonomous agents. Almost nobody is designing the human role that keeps those agents from drifting, lying, or quietly burning money in a corner. That role has a name, and it is not Prompt Engineer. It is Operator: the dedicated, specially trained human who knows one specific agent the way a factory operator knows their line. It is the most underrated job in AI right now, and it decides whether your agents ship value or ship excuses.

From Build to Operate

Software work used to be split into two roles separated by a wall.

Engineers wrote the code. When it was “done,” they tossed it over the wall to Ops, who kept it alive in production while the engineers moved on to the next thing. Two roles. One direction. One heavy wall.

Then DevOps arrived and the wall came down. Engineers learned to deploy, monitor, and operate the things they built. But the primary activity was still upstream: design, code, ship. Operating was a tax on engineering, not the work itself.

Agentic systems break the pattern. The artifact is no longer a static piece of code that runs the same way every time. The artifact is a decision-making process. It judges, drafts, calls tools, fails in new ways every hour, and must be supervised in motion. The center of gravity moves from build to operate.

Engineering is no longer the primary activity. Operating is.

Each Agent Needs Its Own Operator

An AI agent is a factory line. The model is the machine. The prompts, tools, and guardrails are the cells. And every factory line in human history has needed an Operator.

Not a generic “automation person.” An operator trained for that line, who knows its quirks, its failure modes, the smell of trouble before the alarms fire. You don’t staff Toyota’s Takaoka plant with someone who skimmed an O’Reilly book. You staff it with operators who have run that line for years.

Agents are no different. Each agent needs a dedicated, specially trained Operator who knows that agent’s workings the way a factory operator knows the line. Not the model. Not the framework. That agent. Its prompts. Its tools. Its eval rubric. Its known failure modes. Its blast radius.

This applies whether you are an engineer operating an agent inside your own IDE, a support lead running a customer-facing AI in production, or a Factory Architect overseeing a fleet of them. Same role. Same discipline. Same skill ladder.

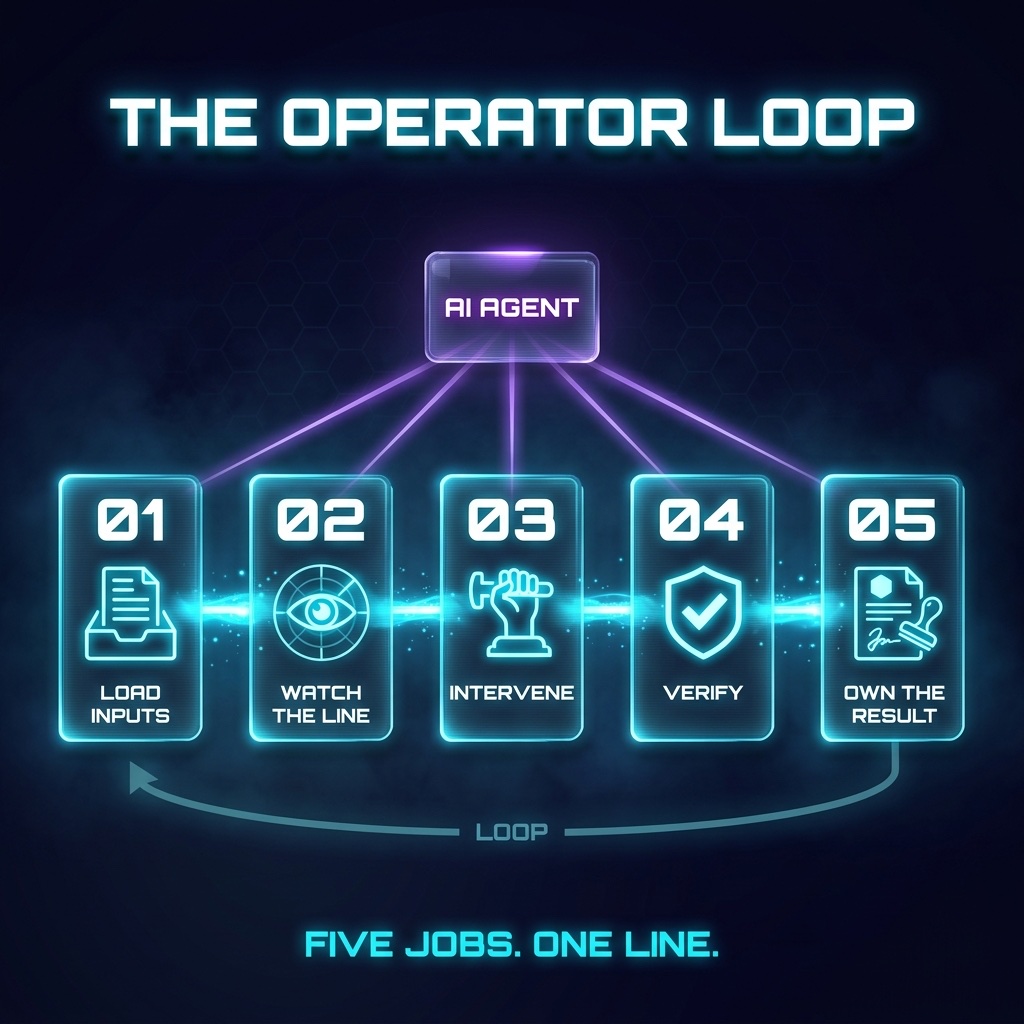

The Operator Has Five Jobs

The Operator job is not a vibe. It is five concrete responsibilities, repeated every run.

- Load Inputs. Curate the context the agent sees. Specs, examples, tools, prior runs, eval data. Garbage in is the most common failure mode for production agents, and it is the Operator’s failure, not the model’s.

- Watch the Line. Stream the agent’s behavior live. Tool calls, intermediate outputs, latency, cost, retries. If you cannot see what the agent is doing while it is doing it, you are not operating. You are praying.

- Intervene. When the line drifts, stop it. Steer it. Inject a correction, kill the run, swap the tool, escalate the input. The Flare Gun is for genuine unknowns. Most interventions are an Operator catching a known failure on its way to the floor.

- Verify. Nothing ships without an Operator-level check. Eval scores, spot reviews, structured signoff. The Operator is the last clean signal before the work becomes the company’s.

- Own the Result. When the agent ships garbage, the Operator owns it. When it ships gold, the Operator owns the line that produced it. No “the model did it.” The Operator did it, with the model.

These are not soft skills. They are a job, with artifacts: dashboards, run logs, eval suites, intervention playbooks, post-incident reviews. They have a career ladder. They will have certifications within two years.
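To make the five jobs concrete, here is a minimal Python sketch of one supervised run. Everything in it is an assumption made for illustration: the `Step` and `RunRecord` shapes, the `operate` function, and the single cost-limit rule standing in for a real intervention playbook. It is not a reference implementation, just one way the loop could look.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class Step:
    """One observable agent action (hypothetical shape)."""
    tool: str
    output: str
    cost_usd: float


@dataclass
class RunRecord:
    """The artifact a run leaves behind, with the Operator's name on it."""
    operator: str
    context: List[str]
    steps: List[Step] = field(default_factory=list)
    interventions: List[str] = field(default_factory=list)
    verified: bool = False


def operate(
    operator: str,
    context: List[str],
    agent_steps: Iterable[Step],
    cost_limit_usd: float,
    verify: Callable[[List[Step]], bool],
) -> RunRecord:
    # 1. Load Inputs: refuse to start a run with no curated context.
    if not context:
        raise ValueError("no context loaded; nothing to run")

    # 5. Own the Result: the record carries the Operator's name from the start.
    record = RunRecord(operator=operator, context=context)

    spent = 0.0
    for step in agent_steps:
        # 2. Watch the Line: every step is captured as it happens.
        record.steps.append(step)
        spent += step.cost_usd

        # 3. Intervene: kill the run once it exceeds the cost limit.
        if spent > cost_limit_usd:
            record.interventions.append(
                f"killed run: spent ${spent:.2f} > limit ${cost_limit_usd:.2f}"
            )
            break

    # 4. Verify: only a run that was not killed can pass verification.
    record.verified = not record.interventions and verify(record.steps)
    return record
```

A caller would pass the agent's step stream and a `verify` function that wraps whatever eval suite the team already uses. The point of the sketch is the shape: nothing runs without inputs, nothing runs unobserved, and nothing is marked verified by default.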

Bad Workflow vs. Operator-Designed Workflow

A bad agent workflow looks like this. Someone fires a prompt, the agent does whatever it does for forty minutes, the human comes back, glances at the output, and ships it. No monitoring. No verification. No accountability. When something blows up in production a week later, everyone shrugs at the model.

An Operator-designed workflow looks like this. The Operator loads the spec, the eval set, and the relevant tool registry. They run the agent on a side branch with a live trace open. When the agent picks the wrong tool at step three, they pause, swap the tool, and resume. When the draft comes back, they run the eval suite, spot-check the top three risks, and either approve the artifact or send it back with concrete feedback. The run is logged. The intervention is logged. The signoff is logged. Every artifact has a name on it.
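The logging half of that workflow can be sketched in a few lines of Python. The `RunLog` class, its entry kinds, and the signoff rule (an eval score at or above a threshold plus at least three risks checked) are hypothetical choices for illustration; a real team would pick its own thresholds and storage.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass
class LogEntry:
    kind: str      # "run", "intervention", or "signoff"
    operator: str  # every entry has a name on it
    detail: str
    at: str        # UTC timestamp, ISO 8601


class RunLog:
    """Append-only log for one agent run (illustrative, in-memory only)."""

    def __init__(self, operator: str) -> None:
        self.operator = operator
        self.entries: List[LogEntry] = []

    def record(self, kind: str, detail: str) -> None:
        now = datetime.now(timezone.utc).isoformat()
        self.entries.append(LogEntry(kind, self.operator, detail, now))

    def sign_off(self, eval_score: float, threshold: float, risks_checked: int) -> bool:
        # Assumed rule: approve only if the eval passes and the top three
        # risks were spot-checked. Either way, the decision is logged.
        approved = eval_score >= threshold and risks_checked >= 3
        verdict = "approved" if approved else "sent back"
        self.record(
            "signoff",
            f"{verdict}: eval={eval_score:.2f}, risks_checked={risks_checked}",
        )
        return approved

    def to_jsonl(self) -> str:
        # One JSON object per line, ready to append to a file or ship to a store.
        return "\n".join(json.dumps(asdict(e)) for e in self.entries)


log = RunLog("dana")
log.record("run", "started on side branch with live trace")
log.record("intervention", "step 3: paused, swapped tool, resumed")
approved = log.sign_off(eval_score=0.92, threshold=0.90, risks_checked=3)
```

A rejected artifact produces a log entry just as an approved one does, which is what makes the run auditable a week later.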

Same agent. Same model. Two completely different production systems. One ships value. The other ships excuses.

The Next Discipline Is Operator Design

The next discipline in AI Agent Design is not prompt engineering. Prompts are a tactic. Operating is a role. It is the job that determines whether your agents become a competitive moat or a compliance disaster.

Stop hiring Prompt Engineers. Start training Operators.

If you are building an agent today, name the Operator before you name the model. Decide what they will see, what they can intervene on, what they must verify, and what they own. Wire the system around them, not around the LLM.
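One way to enforce "name the Operator first" is to write those four decisions down as data and block deployment until they are filled in. The sketch below is a hypothetical Python example: `OperatorCharter`, its field names, and `ready_to_deploy` are invented here to mirror the four questions above, not taken from any existing framework.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class OperatorCharter:
    """What one named Operator sees, controls, checks, and owns for one agent."""
    operator: str
    sees: Tuple[str, ...]              # e.g. traces, tool calls, cost
    can_intervene_on: Tuple[str, ...]  # e.g. pause, kill, swap tool
    must_verify: Tuple[str, ...]       # e.g. eval suite, spot review
    owns: Tuple[str, ...]              # e.g. shipped artifacts, incident reviews

    def gaps(self) -> List[str]:
        """Return the names of any fields left empty."""
        missing = []
        if not self.operator.strip():
            missing.append("operator")
        for name in ("sees", "can_intervene_on", "must_verify", "owns"):
            if not getattr(self, name):
                missing.append(name)
        return missing


def ready_to_deploy(charter: OperatorCharter) -> bool:
    # An agent with any unanswered question does not ship.
    return not charter.gaps()


charter = OperatorCharter(
    operator="dana",
    sees=("live trace", "tool calls", "cost"),
    can_intervene_on=("pause", "kill", "swap tool"),
    must_verify=("eval suite", "spot review"),
    owns=("shipped artifacts",),
)
```

Note that the model does not appear anywhere in the charter. That is deliberate in this sketch: the model can change without the accountability structure changing.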

No agent ships without an Operator. No Operator ships without a line.

That is the next factory.