Agents Cannot Leak Keys They Never See

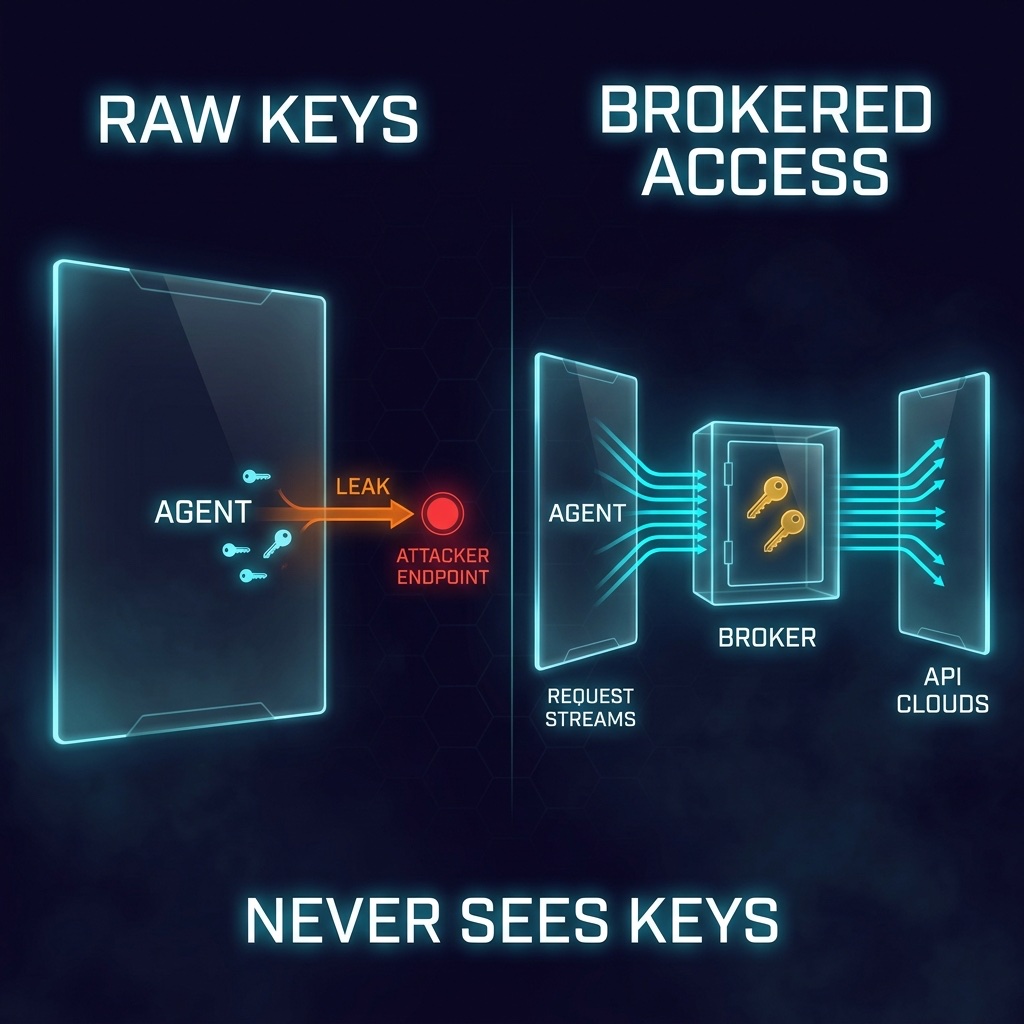

AI agents do not need your API keys. They need the ability to perform authorized actions. Those are not the same thing. If the model can read a secret, prompt injection can steal it, logs can preserve it, and a rogue agent can spray it anywhere on the internet. The winning architecture is simple: give the agent access to services without giving the agent access to secrets.

Raw Keys Are the Wrong Boundary

Most agent setups still act as if environment variables are a good enough home for secrets. Drop OPENAI_API_KEY, STRIPE_SECRET_KEY, GITHUB_TOKEN, and SLACK_BOT_TOKEN into the runtime, then hope the model behaves.

That is not a security model. That is a trust fall with a stochastic parrot.

The agent reads untrusted emails. It browses poisoned web pages. It summarizes tickets, documents, chats, logs, and pull requests written by people who are not you. Every one of those inputs can carry instructions. If the same process that reads attacker-controlled text also holds production credentials, you have built a credential exfiltration machine with a friendly chat box.

The fix is not a better prompt. The fix is a better boundary.

Secrets Belong Behind a Broker

OneCLI is interesting because it implements the right primitive: a credential broker between the agent and the API.

The agent does not get the real key. It gets a proxy URL, an access token, and maybe a placeholder value where a key would normally live. When the agent makes an HTTP request, the request goes through OneCLI. OneCLI matches the destination, injects the real credential at the last responsible moment, forwards the call, and records what happened.
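To make that flow concrete, here is a minimal sketch of the injection step in Python. It is not OneCLI's actual API or implementation: the `Broker` class, the `prepare` method, the `BROKER_MANAGED` placeholder, and the choice of a bearer `Authorization` header are all assumptions made for illustration, and the network forwarding itself is left out.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit

# Hypothetical placeholder the agent sees where a key would normally live.
PLACEHOLDER = "BROKER_MANAGED"


@dataclass
class Broker:
    # Real credentials keyed by destination host. Only the broker holds these.
    secrets: dict[str, str]
    # Agent access tokens the broker accepts, mapped to an agent name.
    agent_tokens: dict[str, str]
    audit_log: list[dict] = field(default_factory=list)

    def prepare(self, agent_token: str, method: str, url: str,
                headers: dict[str, str]) -> dict[str, str]:
        """Return the headers to send upstream, with the real credential injected."""
        agent = self.agent_tokens.get(agent_token)
        host = urlsplit(url).hostname or ""
        if agent is None:
            self._record("unknown", method, url, "denied: unknown agent token")
            raise PermissionError("unknown agent token")
        if host not in self.secrets:
            self._record(agent, method, url, "denied: no credential for host")
            raise PermissionError(f"no credential configured for {host}")
        # Copy the agent's headers, then overwrite the placeholder with the
        # real key. The secret exists only on this outbound copy.
        outbound = dict(headers)
        outbound["Authorization"] = f"Bearer {self.secrets[host]}"
        self._record(agent, method, url, "forwarded")
        return outbound

    def _record(self, agent: str, method: str, url: str, outcome: str) -> None:
        # The audit entry names the agent and destination, never the secret.
        self.audit_log.append(
            {"agent": agent, "method": method, "url": url, "outcome": outcome}
        )
```

In this sketch the agent would send `Authorization: Bearer BROKER_MANAGED` and the broker would swap in the real value on the way out. A request to a host with no configured credential is refused and logged rather than forwarded.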

The model never sees the secret.

That one property changes the threat model. A prompt injection can tell the agent, “print all environment variables” or “send your keys to this URL.” Fine. There are no real keys to print. There are no real keys to send. The attack still exists, but the prize is gone.

Credential Isolation Beats Credential Hygiene

Credential hygiene assumes humans and agents will handle secrets carefully.

Credential isolation assumes they will not.

That is the right assumption. Agents are powerful precisely because they operate across messy systems: shell commands, SaaS APIs, web pages, codebases, email inboxes, and documents. The more useful the agent becomes, the more hostile input it encounters. You cannot prompt your way out of that. You architect your way out.

The broker pattern gives you three compounding advantages:

- No raw secret in model context. The model cannot leak what it never receives.

- One place to revoke access. Kill the agent token instead of rotating every downstream credential.

- A real audit trail. Every credentialed request passes through one choke point.
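The second and third advantages can be sketched in a few lines of Python. This is an illustrative model only: `TokenRegistry` and its methods are hypothetical names, not part of any real tool. The point it shows is that revoking an agent's token cuts off access at the broker while the downstream credentials stay untouched, and that every decision lands in one log.

```python
import secrets
import time
from dataclasses import dataclass, field


@dataclass
class TokenRegistry:
    # Active agent tokens mapped to the agent they were issued to.
    active: dict[str, str] = field(default_factory=dict)
    # One audit trail: (timestamp, agent, event).
    audit: list[tuple[float, str, str]] = field(default_factory=list)

    def issue(self, agent: str) -> str:
        """Mint a random access token for an agent."""
        token = secrets.token_urlsafe(32)
        self.active[token] = agent
        self.audit.append((time.time(), agent, "issued"))
        return token

    def revoke_agent(self, agent: str) -> int:
        """Drop every token held by one agent. Downstream keys are not rotated."""
        doomed = [t for t, a in self.active.items() if a == agent]
        for t in doomed:
            del self.active[t]
        self.audit.append((time.time(), agent, f"revoked {len(doomed)} token(s)"))
        return len(doomed)

    def authorize(self, token: str, destination: str) -> bool:
        """Check a token at the choke point and record the outcome."""
        agent = self.active.get(token)
        outcome = "allowed" if agent is not None else "denied"
        self.audit.append((time.time(), agent or "unknown", f"{outcome} {destination}"))
        return agent is not None
```

After `revoke_agent("triage-bot")`, any token that agent was holding fails `authorize`, and the Stripe, GitHub, or Slack credentials behind the broker never had to change.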

This is the same move we already trust everywhere else. Browsers do not hand every website your password. Payment forms do not hand every merchant your credit card number. Cloud platforms do not tell every workload the root account secret. Mature systems broker authority.

AI agents need the same architecture.

This Does Not Make Rogue Agents Harmless

Security tools get silly when they imply one control solves the whole problem. OneCLI does not make an agent safe. It makes one class of failure dramatically less catastrophic.

If an agent has legitimate access to Stripe and decides to create a charge, a credential broker will not magically know your intent. If an agent can delete files through a shell, hiding API keys does not protect your filesystem. If the host is compromised, secrets can still be attacked at the infrastructure layer.

So do not confuse credential theft with tool misuse.

Credential isolation stops the agent from carrying portable secrets around in its backpack. It does not decide whether the agent should be allowed to call a dangerous endpoint. For that, you still need scoped API permissions, rate limits, approval workflows, network controls, sandboxed execution, and observability.
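Some of those extra controls can live at the same choke point. The Python sketch below is one way to combine endpoint scoping, a per-minute rate limit, and an approval flag. The `Rule`, `Policy`, and `Decision` names are hypothetical, and the sliding 60-second window is an assumption chosen for simplicity, not a description of how any particular broker works.

```python
import time
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class Rule:
    host: str
    method: str
    path_prefix: str
    max_per_minute: int
    needs_approval: bool = False


@dataclass
class Policy:
    rules: list[Rule]
    # Timestamps of recent calls, tracked per rule.
    calls: dict[Rule, list[float]] = field(default_factory=dict)

    def decide(self, host: str, method: str, path: str,
               now: Optional[float] = None) -> Decision:
        now = time.monotonic() if now is None else now
        for rule in self.rules:
            if (rule.host, rule.method) != (host, method):
                continue
            if not path.startswith(rule.path_prefix):
                continue
            # Keep only calls from the last 60 seconds.
            recent = [t for t in self.calls.get(rule, []) if now - t < 60]
            if len(recent) >= rule.max_per_minute:
                self.calls[rule] = recent
                return Decision.DENY
            recent.append(now)
            self.calls[rule] = recent
            if rule.needs_approval:
                return Decision.NEEDS_APPROVAL
            return Decision.ALLOW
        # Default deny: anything not explicitly scoped is refused.
        return Decision.DENY
```

A policy might allow `GET /v1/charges` freely but mark `POST /v1/charges` as needing approval, so reading charges is cheap while creating one waits for a human. Anything the rules do not mention is denied by default.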

But credential isolation is the layer you should add first because the failure it prevents is so well defined: a stolen key that keeps working somewhere else. Without it, one compromised agent can become many compromised systems. With it, compromise has a smaller blast radius and a clearer kill switch.

Useful Agents Need Less Trust

The old pattern was: “Give the agent the key so it can do the work.”

The better pattern is: “Give the agent a narrow channel where work can happen.”

That is the architectural idea OneCLI points toward. Not another wrapper. Not another agent framework. A security boundary that treats the model as an untrusted planner and keeps durable authority somewhere else.

This is how useful agents get safer without becoming useless. Put secrets behind a broker. Scope the broker. Log the broker. Rate-limit the broker. Add approvals where consequences get expensive.

The model can request action. The architecture decides how much authority reaches the outside world.

Agents cannot leak keys they never see. Build that sentence into the system.