AI Vision Is a Game Mechanic Now

There’s a category of game that couldn’t have shipped two years ago. Not because the hardware didn’t exist, or the game design theory wasn’t there, or the players weren’t ready. The core mechanic was impossible. It required a machine that could look at a picture, understand a natural language question about it, and answer accurately — in real time, at scale. That machine now exists. And it unlocks game designs that no one has explored yet.

The Mechanic That Didn’t Exist

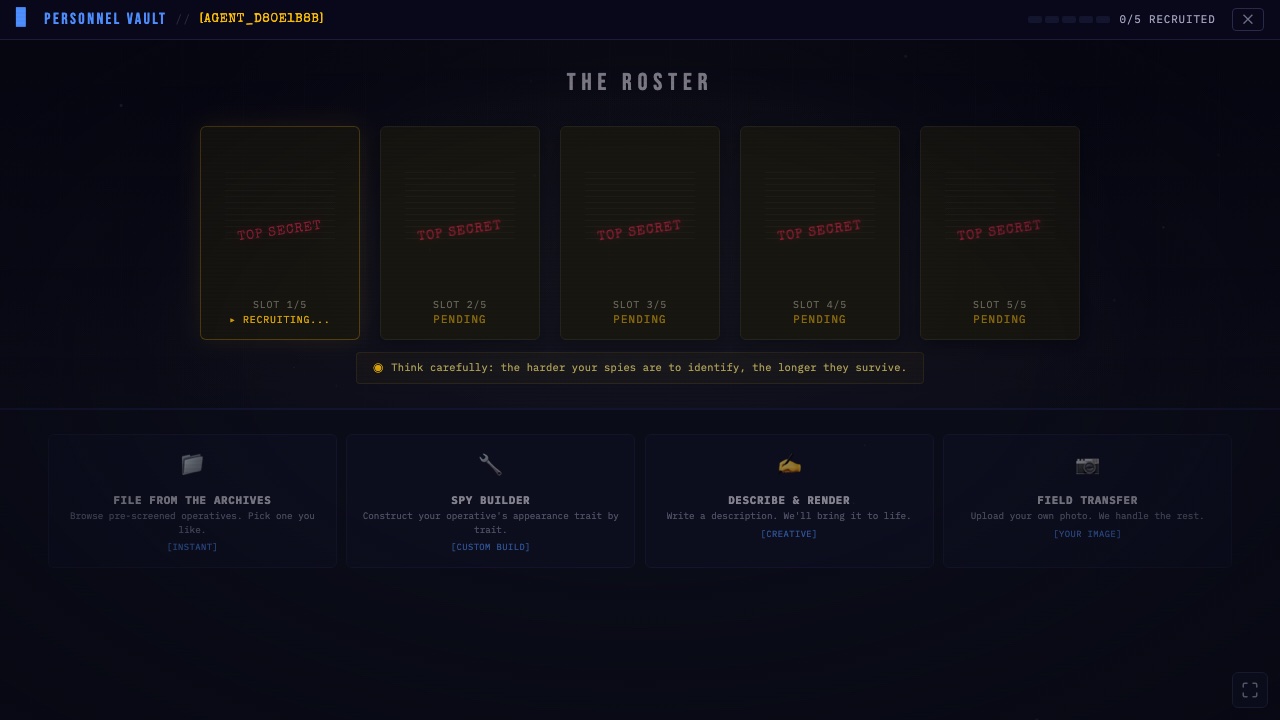

I recently built Spy Hunter, a multiplayer deduction game for a game jam. The core loop: 25 suspects on screen, one is a hidden spy, and you get six natural language questions to find them.

“Does the spy have a beard?” “Is the spy wearing a necklace?” “Does the spy look like they’re in their twenties?”

A vision model looks at the spy’s photo and answers each question. Not from a database of pre-tagged attributes. Not from a fixed decision tree. The AI examines the image and reasons about the answer.
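The loop can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not Spy Hunter's actual code. It assumes a hypothetical `answer_fn(image, question) -> bool` that wraps whatever vision model you call, and it assumes questions get yes/no answers. `Round` and `new_round` are illustrative names. What it shows is the structure: the lineup is just a list of images, and the only thing that answers questions is the model looking at the spy's image.

```python
import random
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical vision-model wrapper: (image path or URL, question) -> yes/no.
# A real build would call a multimodal model here; this sketch only assumes the shape.
AnswerFn = Callable[[str, str], bool]

MAX_QUESTIONS = 6  # six natural-language questions per round


@dataclass
class Round:
    suspects: list[str]   # one image reference per suspect (25 in Spy Hunter)
    spy_index: int        # position of the hidden spy in the lineup
    answer_fn: AnswerFn
    questions_left: int = MAX_QUESTIONS
    log: list[tuple[str, bool]] = field(default_factory=list)

    def ask(self, question: str) -> bool:
        """Ask a free-form question; only the spy's image is sent to the model."""
        if self.questions_left <= 0:
            raise RuntimeError("no questions left this round")
        answer = self.answer_fn(self.suspects[self.spy_index], question)
        self.questions_left -= 1
        self.log.append((question, answer))
        return answer

    def guess(self, index: int) -> bool:
        """Accuse the suspect at `index`; True if it is the spy."""
        return index == self.spy_index


def new_round(suspects: list[str], answer_fn: AnswerFn, rng: random.Random) -> Round:
    """Start a round with the spy chosen uniformly at random from the lineup."""
    return Round(suspects=suspects, spy_index=rng.randrange(len(suspects)), answer_fn=answer_fn)
```

The answering function is injected, so the round logic can be tested with a stub and a real model call swapped in later. No part of the game state describes what a suspect looks like.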

This mechanic is simple to explain, intuitive to play, and was completely impossible before 2024.

Why It Was Impossible

Before capable vision models, deduction games had a hard constraint: every queryable attribute had to be defined and tagged by hand.

Classic Guess Who works because the game space is small and fully enumerated: 24 characters, each distinguished by a handful of visible traits such as hair color, glasses, hats, and facial hair. Every question maps to a known attribute. Every answer is deterministic.
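For contrast, here is a rough sketch of the closed-world approach. The three characters and their tags are invented for illustration and are not the real Guess Who board. `answer` and `eliminate` are hypothetical helpers. Each question is an (attribute, value) pair and each answer is a table lookup. Anything outside the schema cannot be asked.

```python
from typing import Any

# Hand-tagged, closed-world character sheet. Names and traits are invented
# for illustration; they are not the real Guess Who board.
CHARACTERS: dict[str, dict[str, Any]] = {
    "Ada":  {"glasses": True,  "hat": False, "beard": False, "hair": "red"},
    "Ben":  {"glasses": False, "hat": True,  "beard": True,  "hair": "brown"},
    "Cleo": {"glasses": True,  "hat": True,  "beard": False, "hair": "blonde"},
}


def answer(secret: str, attribute: str, value: Any) -> bool:
    """Answer 'does the secret character have attribute == value?' by table lookup."""
    traits = CHARACTERS[secret]
    if attribute not in traits:
        # Nobody tagged this attribute, so the game cannot answer the question.
        raise KeyError(f"untagged attribute: {attribute!r}")
    return traits[attribute] == value


def eliminate(candidates: list[str], attribute: str, value: Any, reply: bool) -> list[str]:
    """Keep only candidates whose tags are consistent with the reply."""
    return [c for c in candidates if (CHARACTERS[c][attribute] == value) == reply]
```

A call like `answer("Ada", "looks_trustworthy", True)` raises `KeyError`, because the schema has no slot for a question nobody anticipated.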

What happens when you want players to create their own characters? When a spy can be anything — a photograph, a painting, a dog, a cardboard box? When players can ask any question in natural language?

You can’t pre-tag that. You can’t build a decision tree for infinite possibilities. You can’t write rules for questions you haven’t imagined yet.

You need a system that can see and reason. That’s what vision models do.

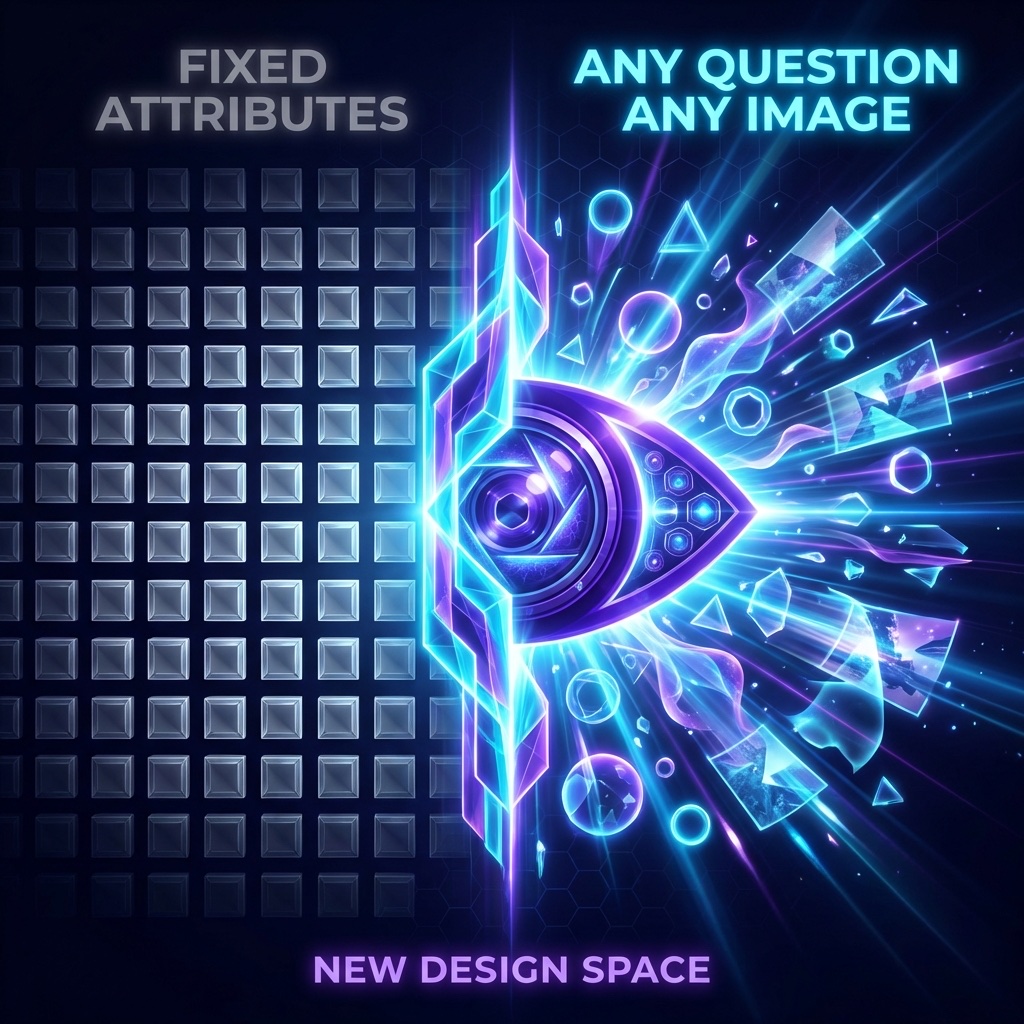

The Design Space This Opens

Spy Hunter is one game in a design space that barely exists yet. Consider what becomes possible when your game engine can:

See and describe images in natural language. Any player-submitted content becomes queryable. A game where you identify paintings. A game where you spot differences in photos. A game where you evaluate whether a scene matches a description.

Answer subjective questions about visual content. “Does this character look trustworthy?” “Is this landscape peaceful or ominous?” “Would this outfit blend in at a formal event?” Subjective judgment, at scale, as a game mechanic.

Generate visual content from descriptions. Players describe what they want, and AI renders it. In Spy Hunter, players can describe a spy and get a generated portrait. The creative input is language, not artistic skill.

These capabilities compose. A game where players describe a scene, AI generates it, other players interrogate it with questions, and the AI judges the answers — that’s three AI capabilities working together as a single gameplay loop.

The Player-Created Content Revolution

The most interesting thing about Spy Hunter’s design isn’t the AI answering questions. It’s what happens when you combine AI answering with unrestricted player creativity.

Players build teams of five spies. They can pick from archives, construct a face trait by trait, describe a character in plain text, or upload any image they want. A dog. A cartoon. A photo of a coffee mug with googly eyes. The system handles it all because the vision model doesn’t need to know what a “valid” spy looks like. It just needs to see whatever’s there and answer questions about it.
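One way to get that property is to reduce every creation path to a single image before the vision model sees it. The following is a minimal sketch. It assumes four hypothetical source types and three injected helpers: `lookup_archive` for the archive, `render_traits` for the trait-by-trait face builder, and `generate` for an image-generation call. None of these names come from Spy Hunter itself.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Union

TEAM_SIZE = 5  # each player fields a team of five spies


@dataclass(frozen=True)
class ArchiveSpy:
    archive_id: str               # picked from a prebuilt archive


@dataclass(frozen=True)
class TraitSpy:
    traits: tuple[str, ...]       # face built trait by trait


@dataclass(frozen=True)
class TextSpy:
    description: str              # plain-text description to be rendered


@dataclass(frozen=True)
class UploadedSpy:
    image_path: str               # any image the player uploads


SpySource = Union[ArchiveSpy, TraitSpy, TextSpy, UploadedSpy]


def resolve_image(
    src: SpySource,
    lookup_archive: Callable[[str], str],
    render_traits: Callable[[tuple[str, ...]], str],
    generate: Callable[[str], str],
) -> str:
    """Turn any spy source into an image reference for the vision model."""
    if isinstance(src, ArchiveSpy):
        return lookup_archive(src.archive_id)
    if isinstance(src, TraitSpy):
        return render_traits(src.traits)
    if isinstance(src, TextSpy):
        return generate(src.description)
    if isinstance(src, UploadedSpy):
        # Deliberately no content check: a dog or a mug is a valid spy.
        return src.image_path
    raise TypeError(f"unknown spy source: {src!r}")


def build_team(sources: Sequence[SpySource], lookup_archive, render_traits, generate) -> list[str]:
    """Resolve a full team; the downstream contract is just 'a list of images'."""
    if len(sources) != TEAM_SIZE:
        raise ValueError(f"a team needs exactly {TEAM_SIZE} spies, got {len(sources)}")
    return [resolve_image(s, lookup_archive, render_traits, generate) for s in sources]
```

The sketch never checks that an upload is a face. The only contract downstream is "this is an image," and that is what lets a coffee mug with googly eyes count as a legal spy.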

This creates an emergent metagame. Players study which questions are common, then design spies that resist those questions. If most hunters ask about hair color, you build a spy whose hair is ambiguous. If hunters focus on accessories, you go minimal. The creative strategy evolves as the player base learns.

No game designer pre-planned these strategies. They emerge from the intersection of player creativity and AI capability.

What Game Designers Should Be Thinking About

The game industry is focused on AI for three things right now: NPC dialogue, procedural generation, and content creation pipelines. Those are all valid uses. But they’re incremental — making existing game types better or cheaper.

The bigger opportunity is game mechanics that are only possible with AI.

Vision-based deduction. Natural language negotiation scored by AI judges. Creative challenges where AI evaluates subjective quality. Collaborative storytelling where AI maintains consistency across player contributions. Games where the rules themselves are interpreted by a language model.

These aren’t better versions of existing games. They’re games that have no pre-AI equivalent. They’re a new design space.

Go Play Something Impossible

Two years ago, Spy Hunter couldn’t exist. The core mechanic — a machine that sees a picture, hears a question, and gives an accurate answer — wasn’t available at the quality and cost required for a game.

Now it is. And this is just one game in a space that’s wide open.

If you’re a game designer, start asking: what game mechanic would I build if I had an AI that could see, hear, read, and reason? The answer is your next project.

If you’re a player, come see what this feels like. Play Spy Hunter — and experience a game that couldn’t have existed before.